Understanding and Mitigating Bias in Large Language Models (LLMs)

.avif)

.avif)

If you've been following the developments in technology, you've likely encountered the term "Large Language Models (LLMs)" gaining traction. LLMs are currently at the forefront of the artificial intelligence (AI) landscape, driving the generative AI revolution by mastering human languages, exemplified by models like ChatGPT and Bard.

Their ability to replicate human conversations through advanced natural language processing (NLP) systems has positioned LLMs as key players in today's dynamic market. However, like any technology, AI-powered assistants face unique challenges, with one significant concern being the potential for bias embedded within the data used to train these models.

The Prediction and Language Generation Process in LLMs

LLMs utilize Transformer models, a deep learning framework that comprehends context and sequences via data analysis.

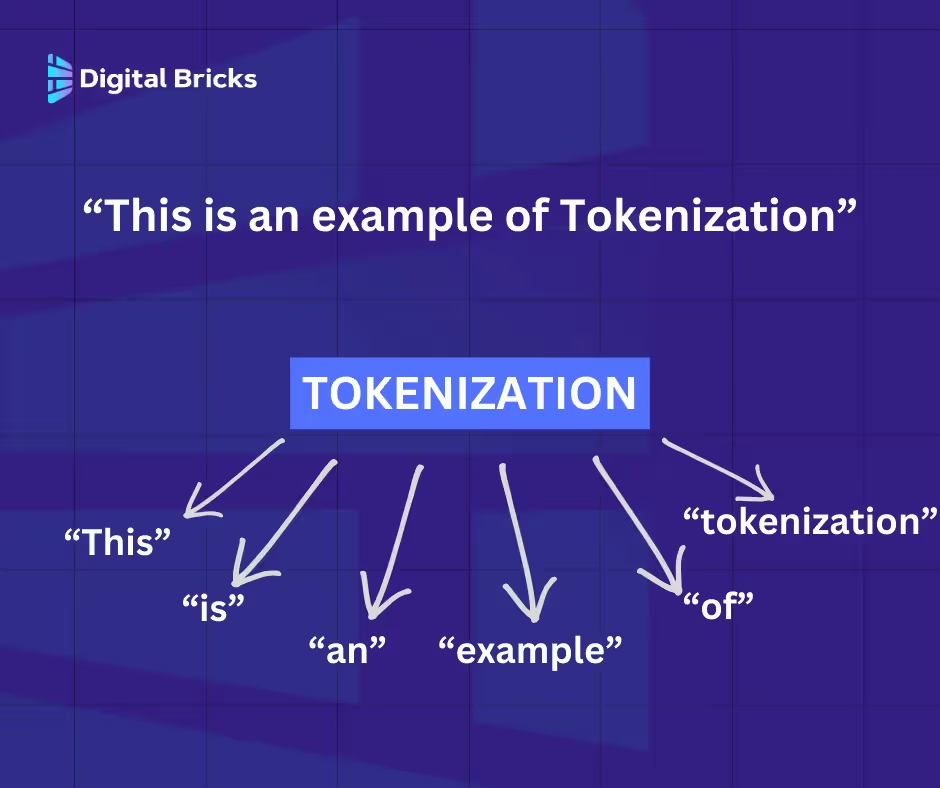

Tokenization involves breaking down input text into tokens, enabling the model to analyze and process them through mathematical equations to uncover relationships. This process employs a probabilistic method to predict the subsequent word sequences during the model's training phase.

The training phase consists of inputting the model with massive sets of text data to help the model understand various linguistic contexts, nuances, and styles. LLMs will create a knowledge base in which they can effectively mimic the human language.

LLMs' remarkable versatility and language comprehension underscore their advanced AI capabilities. Trained on vast datasets spanning diverse genres like legal documents and fictional narratives, LLMs demonstrate adaptability across various scenarios and contexts.

Beyond text prediction, LLMs exhibit versatility in handling tasks across languages, contexts, and outputs, evident in applications like customer service. This adaptability stems from extensive training on specific datasets and meticulous fine-tuning, enhancing effectiveness across diverse fields.

Nevertheless, it's essential to acknowledge LLMs' unique challenge: bias.

LLMs, drawing from a spectrum of text sources, absorb this data as their foundational knowledge, often interpreting it as truth. However, embedded biases and misinformation within these datasets can skew the LLM's outputs, perpetuating bias.

It's notable that a tool celebrated for its productivity enhancement now confronts ethical scrutiny. Learn more about AI ethics in our course; Ethical Horizons: Navigate AI with Integrity.

More data typically leads to better outcomes. However, if the training data used for LLMs includes biased or unrepresentative samples, the model will inevitably learn and perpetuate these biases. Examples of LLM bias include gender, race, and cultural bias.

For instance, if the majority of data portrays women as cleaners or nurses and men as engineers or CEOs, LLMs may reflect societal stereotypes. Similarly, racial bias may cause LLMs to reinforce certain ethnic stereotypes, contributing to cultural bias.

The primary sources of bias in LLMs stem from:

Despite the versatility of LLMs, they may struggle with multicultural content, highlighting a significant challenge. Ethical concerns arise from the potential use of LLMs in decision-making processes. Learn more about these issues in our course.

Bias in LLMs has significant repercussions for both users and society at large.

Reinforcement of stereotypes

Biases in LLM training data perpetuate harmful stereotypes related to culture and gender. This reinforcement sustains societal prejudices, hindering progress.

Continued ingestion of biased data by LLMs exacerbates cultural divides and gender disparities.

Discrimination

Discrimination, based on sex, ethnicity, age, or disability, arises from underrepresented training data that fails to accurately reflect diverse groups.

LLM outputs containing biased responses perpetuate racial, gender, and age discrimination, affecting marginalized communities' daily lives, from hiring processes to educational opportunities. This lack of diversity and inclusivity in LLM outputs raises ethical concerns regarding their use in decision-making processes.

Misinformation and disinformation

Concerns about biased or unrepresentative training data raise questions about its accuracy. Dissemination of misinformation or disinformation through LLMs can have grave consequences.

For instance, healthcare decisions based on LLMs with biased information can be perilous. Similarly, politically biased LLMs may propagate narratives leading to political disinformation.

Trust

Ethical concerns surrounding LLMs contribute to societal apprehension toward AI system implementation in everyday life. Concerns about job loss and economic instability further erode trust in AI systems.

Existing distrust in AI systems coupled with LLM biases can completely undermine societal confidence. To gain societal acceptance, LLM technology must earn trust.

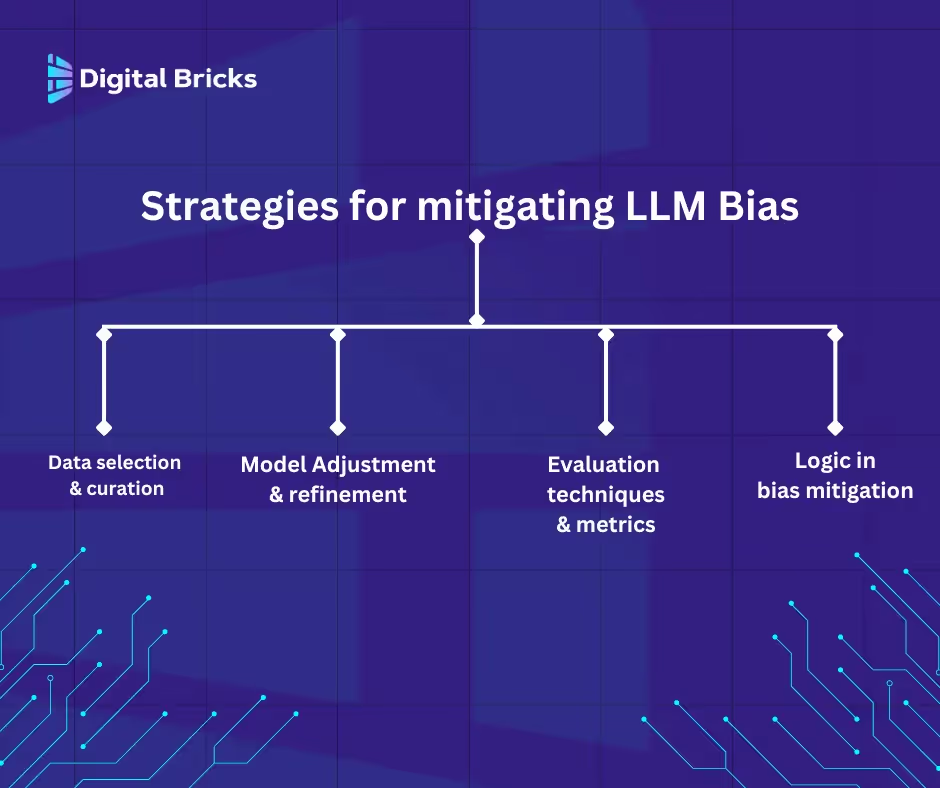

Data Selection and Curation

Initiating from the foundation, let's address the data aspect. Companies bear a significant responsibility for the data they feed into models.

Ensuring diversity in the training data utilized for LLMs is critical. Drawing from varied demographics, languages, and cultures balances the representation of human language. This practice safeguards against unrepresentative samples and informs targeted model fine-tuning efforts, pivotal in mitigating bias when applied at scale.

Model Adjustment and Refinement

Following the collation of diverse data sources, organizations can refine accuracy and minimize biases through model fine-tuning. Various approaches facilitate this:

Evaluation Techniques and Metrics

To foster safe integration of AI systems into society, organizations must employ diverse evaluation methods and metrics. Before widespread deployment, it's imperative to implement comprehensive evaluation methods capturing various dimensions of bias in LLM outputs.

Methods include human, automatic, or hybrid evaluation, all aimed at detecting, estimating, or filtering biases. Metrics such as accuracy, sentiment, and fairness offer insights into bias in LLM outputs, facilitating continuous improvement.

Logic in Bias Mitigation

Recent research from MIT's CSAIL suggests integrating logical reasoning into LLMs to address bias: Large language models biased. Can logic help save them?

Incorporating logical and structured thinking enables LLMs to process and generate outputs with sound reasoning and critical thinking, leading to more accurate responses.

The approach involves constructing a neutral language model, where token relationships are considered 'neutral.' Training models using this method yielded less biased outputs without requiring additional data or algorithmic adjustments.

Logic-aware language models possess the capacity to circumvent harmful stereotypes, fostering more equitable outcomes.

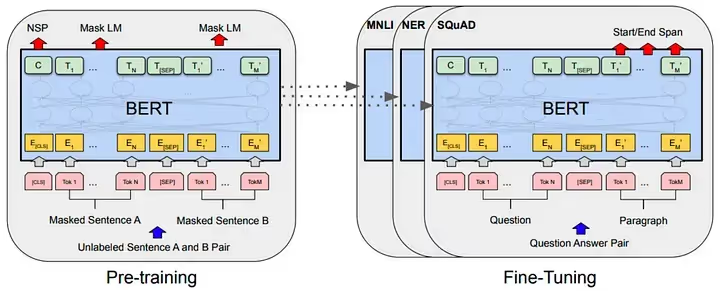

Expanding Training Data for Enhanced LLM Performance: Google's Approach with BERT

Google Research enhances its LLM BERT by broadening its training data to ensure inclusivity and diversity. Utilizing extensive datasets with unannotated text during pre-training enables the model to adapt to various tasks during fine-tuning. The objective is to develop a less biased LLM capable of generating more resilient outputs. Google Research reports a decrease in stereotypical outputs and ongoing enhancements in understanding diverse dialects and cultural nuances.

Balancing competing objectives is often daunting. This rings true when seeking equilibrium between mitigating LLM bias while upholding or even elevating the model's performance. Debiasing models are pivotal for ensuring fairness. Yet, we cannot afford to compromise the model's accuracy and efficacy.

A strategic blueprint is indispensable to ensure that bias mitigation methods like data curation, model fine-tuning, and employing diverse techniques do not hinder the model's proficiency in comprehending and generating language outputs. It's a journey of refinement and adaptation, where debiasing and enhancement must coexist without compromising performance.

In this pursuit, it's about constant refinement, vigilant monitoring, and iterative improvements.

To learn more, check out our AI Mastery course, which covers how these powerful tools are reshaping the AI landscape.