How Microsoft Partners Can Turn AI Disruption into AI Opportunities

Since the start of 2026, the AI market has shifted from headline-grabbing experimentation to something more structural: a battle over platforms, infrastructure, and who controls enterprise workloads end to end.

For CIOs and the partner channel, this means AI is no longer only a feature discussion. It is becoming a foundational layer of enterprise computing, and it is forcing new decisions about standards, governance, security, and operating models.

Recent developments make the shift hard to ignore, so let's examine each one.

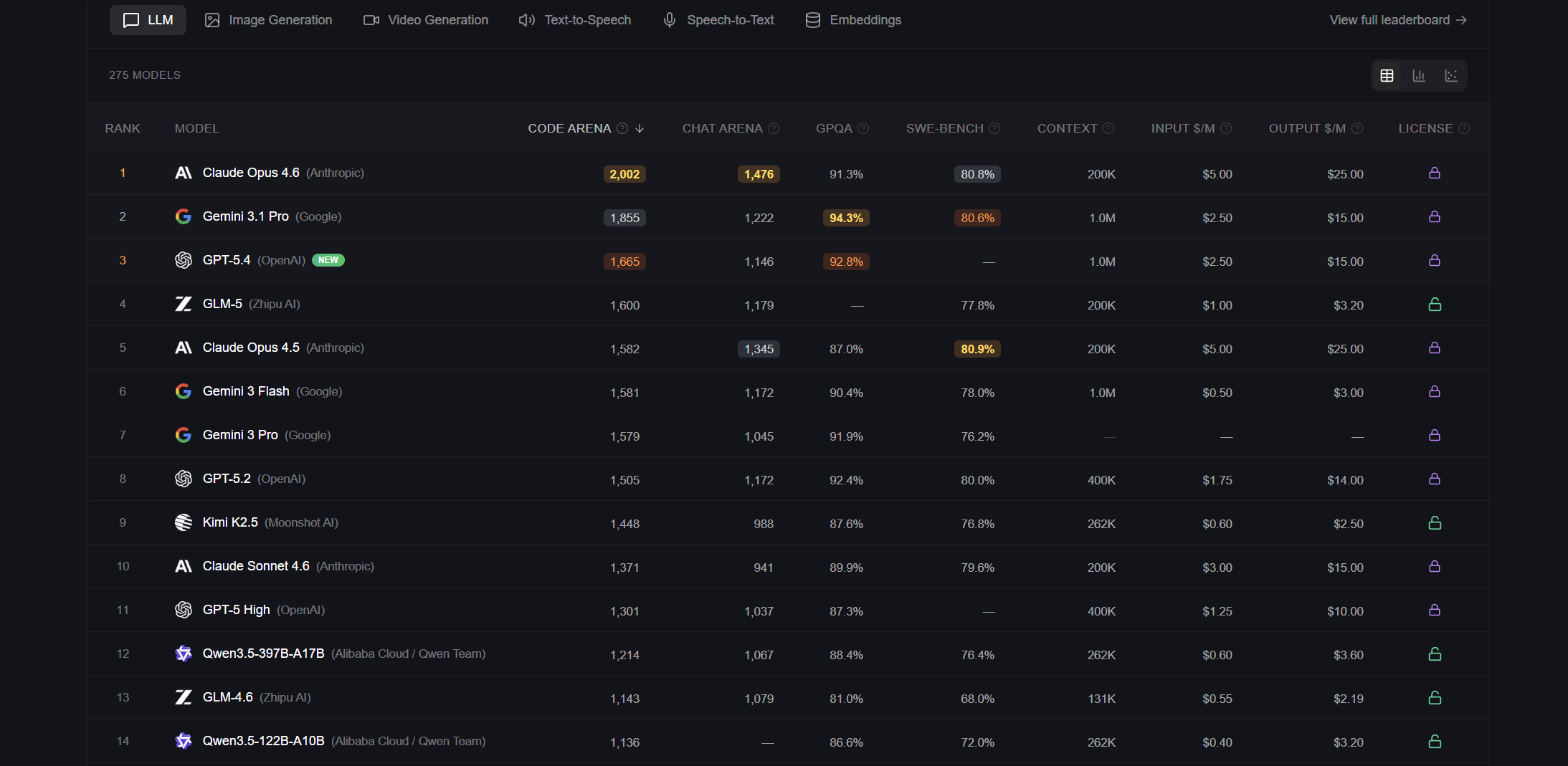

The pace of frontier model releases is now measured in weeks, not years. On February 5, 2026, OpenAI released GPT‑5.3‑Codex, positioning it as a step forward in agentic capability with availability across Codex surfaces such as the app, CLI, IDE extension, and web. That same day, Anthropic released Claude Opus 4.6, available via its API and major cloud platforms. A couple of weeks later, Google DeepMind advanced the Gemini 3 series again, shipping Gemini 3.1 Pro across the Gemini API, Vertex AI, the Gemini app, and NotebookLM.

What matters for enterprise buyers is not just the raw capability curve. It is the cadence. When core models meaningfully shift multiple times inside a single quarter, customers face a new planning problem: how do you standardize architecture, governance, and skills when the baseline keeps moving?

That is why “model wars” quickly become “platform wars.” The leading vendors are building vertically integrated stacks that combine foundation models with developer surfaces, agent tooling, security controls, and distribution through enterprise products.

OpenAI is explicitly productizing agent workflows through Codex tooling and positioning models as collaborators that execute multi-step tasks on a computer.

Google DeepMind is shipping Gemini models directly into consumer and enterprise channels, including Search and developer platforms, rather than treating the model as a standalone API.

Anthropic is pushing model availability across clouds and wrapping the technology with enterprise-facing programs that accelerate adoption.

For Microsoft partners, this shift changes how customers buy. Many organizations are moving from isolated pilots to selecting a primary AI platform posture, similar to how they once standardized on a primary cloud approach. That does not mean “one model forever.” It means one operating model for identity, security, data access, monitoring, and change management, with model choice managed inside that boundary.

The strongest signal in the channel right now is licensing and control, not a single model benchmark.

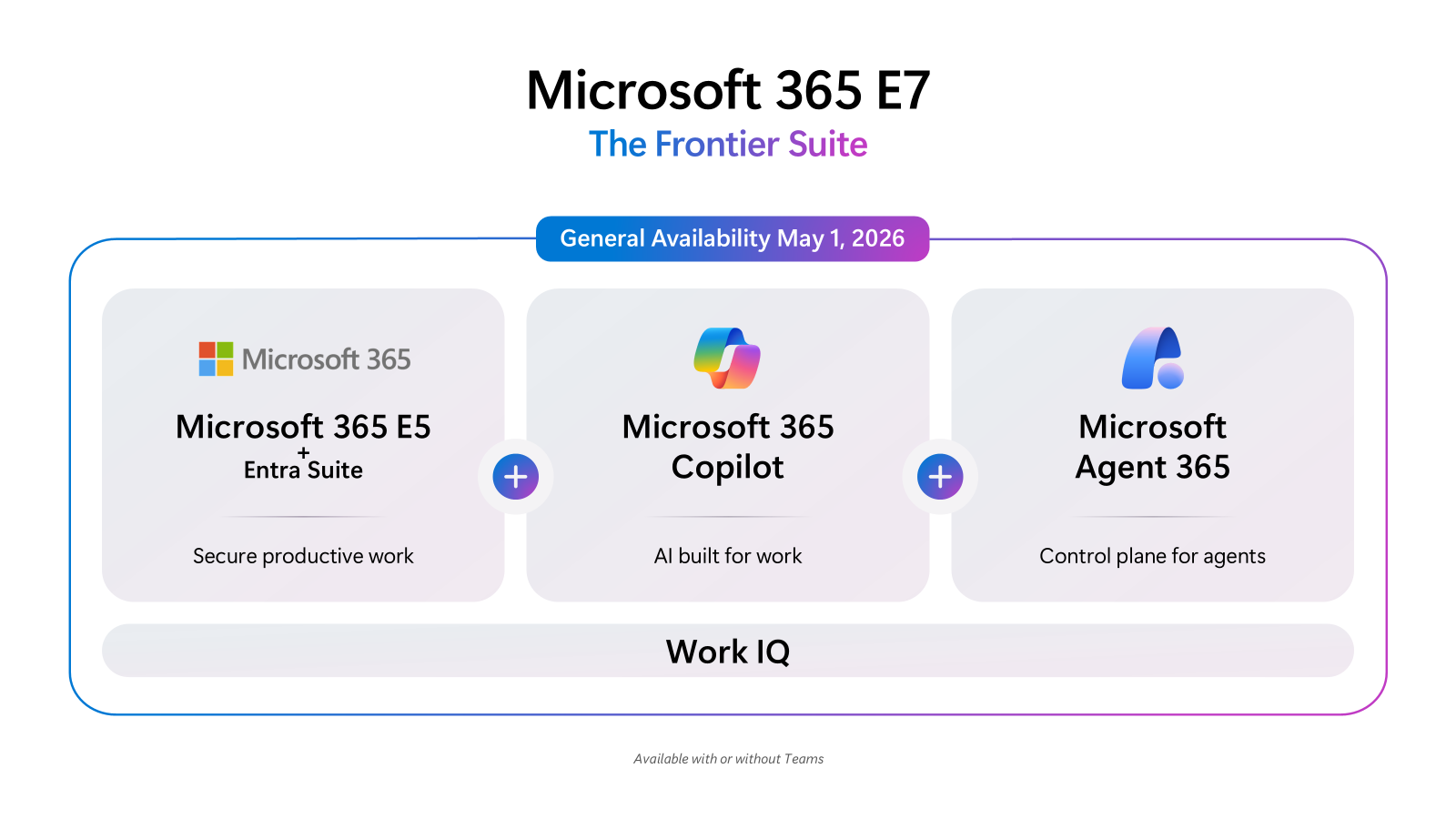

On March 9, 2026, Microsoft announced Microsoft 365 E7: The Frontier Suite, positioned as a single suite that unifies Microsoft 365 E5, Microsoft 365 Copilot, and the new agent control plane called Agent 365, plus the Microsoft Entra Suite and additional security capabilities across Defender, Intune, and Purview. Microsoft set general availability for May 1, 2026, with a stated retail price of $99 per user per month.

This is not just packaging. It signals how AI will be sold into the enterprise: embedded into the productivity stack, tied to identity and security, and governed as a first-class workload. Microsoft explicitly frames Agent 365 as the control plane to observe, govern, manage, and secure agents across the organization.

First, Microsoft is expanding the definition of the “AI install base.” Microsoft 365 Copilot Chat is positioned as available at no additional cost for Microsoft Entra account users with eligible Microsoft 365 subscriptions, while agent usage is metered and requires the right underlying subscriptions and, in some cases, Azure-related consumption. In practical terms, more users will have an entry point to AI, but value creation will concentrate around integrating, governing, and operationalizing agents and Copilot experiences.

Second, Microsoft is emphasizing “enterprise control” as a product primitive. Agent 365 is described as enforcing least-privilege access and providing logging, reporting, and audit trails for agent actions and security events. This aligns with where analysts see the market heading: secure, scalable AI foundations and AI security platforms that centralize visibility and enforce usage policies.
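To make "least-privilege access plus an audit trail" concrete, here is a minimal sketch of the pattern in Python. It is not Agent 365's API: `AgentPolicy`, `AuditLog`, `authorize`, and the action names are hypothetical, chosen only to illustrate a deny-by-default check in which every decision is logged.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentPolicy:
    """Hypothetical policy: the only actions an agent has been granted."""
    agent_id: str
    allowed_actions: frozenset[str]


@dataclass
class AuditLog:
    """In-memory audit trail (a real system would use durable storage)."""
    entries: list[dict] = field(default_factory=list)

    def record(self, agent_id: str, action: str, allowed: bool) -> None:
        self.entries.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "action": action,
            "allowed": allowed,
        })


def authorize(policy: AgentPolicy, action: str, log: AuditLog) -> bool:
    """Deny by default: allow only explicitly granted actions, log every decision."""
    allowed = action in policy.allowed_actions
    log.record(policy.agent_id, action, allowed)
    return allowed


if __name__ == "__main__":
    # Hypothetical agent that may read invoices and draft emails, nothing else.
    policy = AgentPolicy("invoice-agent", frozenset({"read_invoice", "draft_email"}))
    log = AuditLog()
    print(authorize(policy, "read_invoice", log))    # True: explicitly granted
    print(authorize(policy, "delete_invoice", log))  # False: never granted
    print(len(log.entries))                          # 2: both decisions were recorded
```

The design point is that denied requests are logged as carefully as allowed ones. For governance and security review, the attempts an agent was not permitted to make are often the most useful signal.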

For the channel, this changes the shape of the revenue opportunity. Microsoft describes its partner ecosystem as about 500,000 partners and growing. It also highlights IDC analysis showing that partner economics are heavily services- and IP-driven, with services partners earning multiples on top of Microsoft revenue.

That context matters because E7 pushes partners into a higher-value role: not “license + deployment,” but an AI transformation partner. Customers will need help redesigning workflows, operationalizing agent governance, shaping adoption programs, and proving outcomes. McKinsey’s survey research aligns with that reality: scaling AI is still a work in progress for most organizations, and meaningful value correlates with deliberate workflow redesign and strong operating practices.

Microsoft’s own organizational moves reinforce the direction. Microsoft reported that it is reorganizing Copilot teams by unifying commercial and consumer versions, while shifting leadership focus toward building new AI models. That is what a platform company does when it believes the next interface layer is being defined.

The Dynamics ecosystem is not a side story in this shift. It is one of the most operationally valuable places to apply agents because it sits on top of revenue, service delivery, finance, and supply chain processes.

Microsoft’s own product messaging is explicit: Dynamics 365 “brings AI to the core of your business,” combining agents, Copilot experiences, and built-in AI across ERP and CRM. It also emphasizes that many Dynamics 365 apps share a platform foundation with model-driven apps and run on Dataverse, which is part of why AI capabilities can be delivered consistently across apps.

In Dynamics 365 Sales, Copilot provides a chat interface that can summarize opportunities and leads, help sellers catch up on record changes, prepare for meetings, and pull relevant account news, while restricting visibility to what the signed-in user can access. In Dynamics 365 Customer Service, Copilot features can help representatives respond to questions, compose emails, draft chat responses, and summarize cases and conversations, although some features vary by region and rollout status.

This creates a very specific partner opportunity: Dynamics AI is not just about “better text generation.” It is about connecting Copilot and agents to business processes that already have owners, KPIs, audit requirements, and compliance constraints. That is where partners can win by packaging repeatable offerings around data readiness, role-based copilots, and “human-in-the-loop” workflows that align with governance.
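As an illustration of what a “human-in-the-loop” workflow can look like in practice, the sketch below routes an agent's proposed action either to automatic execution or to a human reviewer, using monetary impact as a simple risk proxy. Everything here is an assumption made for illustration: `ProposedAction`, `execute_with_review`, the threshold values, and the reviewer rule are hypothetical and do not correspond to any Dynamics 365 or Copilot API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ProposedAction:
    """Hypothetical action an agent wants to take on a business record."""
    description: str
    amount: float  # monetary impact, used here as a simple risk proxy


def execute_with_review(
    action: ProposedAction,
    approval_threshold: float,
    approve: Callable[[ProposedAction], bool],
) -> str:
    """Auto-apply low-impact actions; send everything else to a human reviewer."""
    if action.amount <= approval_threshold:
        return "auto-applied"
    return "approved" if approve(action) else "rejected"


def cautious_reviewer(action: ProposedAction) -> bool:
    """Stand-in for a human decision: approve only actions under 5,000."""
    return action.amount < 5000


if __name__ == "__main__":
    threshold = 100.0  # hypothetical limit for unattended execution
    for proposal in [
        ProposedAction("Issue goodwill credit", 50.0),
        ProposedAction("Refund disputed order", 2500.0),
        ProposedAction("Write off overdue invoice", 9000.0),
    ]:
        print(proposal.description, "->",
              execute_with_review(proposal, threshold, cautious_reviewer))
```

In a packaged offering, the threshold and the approval step would map to the process owner and audit requirements that already exist for that workflow, which is what makes the pattern repeatable across customers.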

A year ago, many AI labs still operated like research-first organizations. That posture is quickly fading as enterprise distribution becomes the competitive battleground.

Anthropic announced the Claude Partner Network and committed an initial $100 million to support partners through training, technical support, and joint market development. It also introduced a technical certification track and described scaling its partner-facing team to provide applied AI engineers and solution architecture support.

Two additional points in that announcement are worth reading as strategic signals.

First, Anthropic positions Claude as available across “all three leading cloud providers,” naming Amazon Web Services, Google Cloud, and Microsoft, which is a deliberate appeal to enterprises that want portability and procurement flexibility.

Second, Anthropic explicitly frames partners as the guides who help enterprises navigate deployment requirements, compliance, and change management.

For Microsoft partners, this validates a broader reality: being “good at AI” will increasingly mean being good at the channel motions the enterprise already trusts. That includes certifications, reference architectures, industry accelerators, security baselines, and post-deployment managed services.

It also opens a practical frontier for partners: building industry-specific apps and automations on top of foundation models and agent tooling. When models improve monthly, differentiation shifts to domain context, process integration, and governance. That is partner territory, especially in Dynamics-heavy environments where value lives in workflows and data quality, not chat quality.

As AI systems move from experimentation into production, the legal surface area is expanding in parallel. On March 16, 2026, Encyclopedia Britannica sued OpenAI in Manhattan federal court, alleging unauthorized use of copyrighted content to train and support model outputs. This follows a pattern: publishers, media organizations, and knowledge platforms are increasingly challenging training data practices and the market impact of AI-generated substitutes.

The legal uncertainty is not abstract. Reuters’ analysis of 2025 decisions shows U.S. courts beginning to issue substantive rulings on whether AI training is fair use, with early guideposts that emphasize lawful data sourcing and treat piracy as a major risk factor. At the same time, cases involving direct competition with news content, such as The New York Times litigation, are framed as potentially harder tests for fair use because they implicate active licensing markets and substitution risk.

In Europe, the debate is moving faster and in a more prescriptive direction. Earlier this month, the European Parliament called for stricter enforcement of copyright in AI training, including proposals for mandatory disclosure of training data sources and even a central register of copyrighted works used by models. At the same time, the AI Act’s obligations around transparency for general-purpose AI models are beginning to take effect, requiring providers to publish summaries of their training data and demonstrate compliance with copyright law.

For enterprises deploying AI tools, the operational question is becoming unavoidable: who is responsible when an AI system produces infringing, confidential, or otherwise problematic content? Even if the courts take years to settle precedent, procurement and governance cannot wait. This is why Microsoft is concentrating on agent observability, policy, and auditability as core product capabilities.

Taken together, these shifts point in one direction. AI is no longer a fast-moving feature trend. It is hardening into core economic infrastructure that shapes how software is built, how organizations operate, and how digital markets compete.

For Microsoft partners, the opportunity is significant, but it is not passive. Microsoft 365 E7 and Agent 365 are signals that the next wave of enterprise value will be built on three disciplines: workflow redesign, governed agent deployment, and security architecture that treats AI like a first-class identity and risk domain.

Dynamics-focused partners have an additional advantage: they already sit closest to the processes where AI can produce measurable outcomes. The winners will be the partners who can connect Copilot and agents to clean data, well-defined roles, and real operational metrics across sales, service, finance, and supply chain.