Understanding the Agentic Reasoning Loop

Artificial intelligence is entering a new phase, one defined less by model performance than by behaviour. For years, progress has been measured by how well systems respond. Now the shift is towards how systems operate. At the centre of this change is the agentic reasoning loop, a concept that is quietly redefining what AI can actually do inside an organisation.

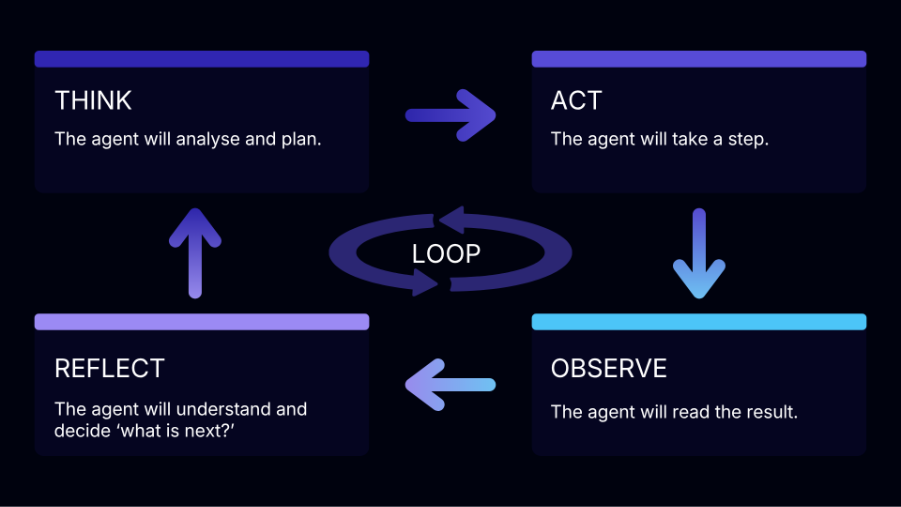

A reasoning loop describes a cyclical process in which an AI agent alternates between thinking and acting. Instead of producing a single response, the agent plans a step, executes it, observes the result, and then decides what to do next. This sequence repeats, with the agent refining its approach based on real outcomes rather than assumptions. What sounds simple in structure is profound in impact. It turns AI from something that answers into something that works.


Most AI systems today still operate in a one-shot paradigm. A user provides an input, and the system generates an output. Even when that output is sophisticated, the interaction ends there. There is no validation, no iteration, and no opportunity for the system to adapt once the response has been delivered.

The agentic reasoning loop changes this entirely.

Instead of aiming to be correct in a single pass, an agent works towards an outcome over time. It can break down problems, test approaches, and refine its strategy as it progresses. Each iteration improves the next decision, grounding the agent in what has actually happened rather than what it predicts might happen. This is the point where AI transitions from being reactive to becoming operational.

At the heart of the reasoning loop are four repeating phases: think, act, observe, and reflect.

The agent begins by analysing the task and planning its next step. It then takes an action, often through a tool or system. That action produces a result, which the agent observes and evaluates. Finally, it reflects on that outcome and determines how to proceed.

This loop continues until a clear termination condition is met, such as achieving the goal, reaching a defined limit, or encountering an unrecoverable error. The power lies in the continuity. Each cycle is grounded in real observations, which tends to improve performance on longer and more complex tasks.
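The four phases and the three termination conditions can be sketched in a few lines of Python. This is a minimal illustration rather than a production design: `run_loop`, `LoopResult`, and the `think`, `act`, and `goal_reached` callables are hypothetical names chosen for this example, and the "agent" here is a toy that doubles numbers instead of calling a model.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, List


@dataclass
class LoopResult:
    status: str                      # "goal_reached", "step_limit", or "error"
    steps: int                       # number of cycles that were started
    observations: List[Any] = field(default_factory=list)


def run_loop(
    think: Callable[[List[Any]], Any],
    act: Callable[[Any], Any],
    goal_reached: Callable[[List[Any]], bool],
    max_steps: int = 10,
) -> LoopResult:
    """Run think -> act -> observe -> reflect until a termination condition is met."""
    observations: List[Any] = []
    for step in range(1, max_steps + 1):
        plan = think(observations)            # think: plan the next step
        try:
            observation = act(plan)           # act: execute the plan
        except Exception as exc:              # unrecoverable error ends the loop
            observations.append(f"error: {exc}")
            return LoopResult("error", step, observations)
        observations.append(observation)      # observe: record what happened
        if goal_reached(observations):        # reflect: is the goal achieved?
            return LoopResult("goal_reached", step, observations)
    return LoopResult("step_limit", max_steps, observations)  # defined limit hit


# Toy usage: plan the next integer, double it, stop once a result reaches 6.
result = run_loop(
    think=lambda obs: len(obs) + 1,
    act=lambda plan: plan * 2,
    goal_reached=lambda obs: obs[-1] >= 6,
)
print(result.status, result.steps)  # goal_reached 3
```

In a real agent, `think` would typically be a model call and `act` a tool invocation. The point of the sketch is that every exit path from the loop is explicit.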

One of the most important characteristics of the reasoning loop is how it connects reasoning to real-world interaction. Patterns such as ReAct (short for reasoning and acting) interleave thought and action, so that decisions are repeatedly checked against observable outcomes.

Rather than relying purely on internal logic, the agent actively engages with its environment. It reads files, queries systems, executes code, and interprets results before moving forward. This reduces the likelihood of hallucinations and helps anchor each step in something verifiable.

In practice, this means the agent is not just thinking about the problem. It is working through it.

Tools are what extend the reasoning loop into the real world. Every tool call represents an action, and every action produces an observation that feeds back into the loop. This is what transforms a language model into an agent.
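One way to picture the relationship between tool calls and observations is a small registry that maps tool names to functions and wraps every call in a uniform result. The sketch below is an assumption-laden illustration: `TOOLS`, `tool`, `call_tool`, and the two example tools are invented for this article and do not correspond to any particular agent framework.

```python
from typing import Any, Callable, Dict

# Hypothetical registry: tool name -> Python callable.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(name: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Decorator that registers a function as a named tool."""
    def register(fn: Callable[..., Any]) -> Callable[..., Any]:
        TOOLS[name] = fn
        return fn
    return register


@tool("word_count")
def word_count(text: str) -> int:
    return len(text.split())


@tool("to_upper")
def to_upper(text: str) -> str:
    return text.upper()


def call_tool(name: str, **kwargs: Any) -> Dict[str, Any]:
    """Execute a tool (the action) and return an observation for the loop."""
    if name not in TOOLS:
        return {"tool": name, "ok": False, "result": f"unknown tool: {name}"}
    try:
        return {"tool": name, "ok": True, "result": TOOLS[name](**kwargs)}
    except Exception as exc:
        # Failures are returned as observations too, so the agent can react.
        return {"tool": name, "ok": False, "result": str(exc)}


observation = call_tool("word_count", text="plan act observe")
print(observation)  # {'tool': 'word_count', 'ok': True, 'result': 3}
```

Returning failures as observations, rather than raising them, is a design choice: it lets the agent see that an action failed and decide what to try next.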

Modern agents can interact with a wide range of tools, from simple read and write operations to complex integrations with enterprise systems, APIs, and communication platforms. This allows them to operate across workflows rather than being confined to a single interface.

The implication is significant. AI is no longer limited to assisting with individual tasks. It can begin to orchestrate entire processes.

As agents become more capable, understanding their behaviour becomes essential. The reasoning loop introduces a level of observability that one-shot systems do not offer.

Because each step is explicit, organisations can trace how a decision was made, what actions were taken, and what information was used. This creates a transparent chain of reasoning that can be inspected, validated, and improved over time.
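A trace of this kind can be as simple as an append-only list of step records. The following sketch assumes a hypothetical `Trace` class with `TraceStep` entries. The field names and the example steps are illustrative only and are not taken from any specific product.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class TraceStep:
    step: int
    thought: str       # why the agent chose this action
    action: str        # what it did
    observation: str   # what came back


class Trace:
    """Append-only record of the loop, suitable for later inspection."""

    def __init__(self) -> None:
        self.steps: List[TraceStep] = []

    def record(self, thought: str, action: str, observation: str) -> TraceStep:
        entry = TraceStep(len(self.steps) + 1, thought, action, observation)
        self.steps.append(entry)
        return entry

    def actions(self) -> List[str]:
        return [s.action for s in self.steps]

    def to_json(self) -> str:
        return json.dumps([asdict(s) for s in self.steps], indent=2)


trace = Trace()
trace.record("Need the invoice total", "read_file invoice.txt", "total: 120")
trace.record("Total found, report it", "send_summary", "sent")
print(trace.to_json())
```

Because every step carries its thought, action, and observation together, a reviewer can answer "why did the agent do this?" from the record alone.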

This is not just a technical advantage. It is a requirement for trust. Without visibility, AI remains difficult to govern. With it, organisations can begin to scale AI with confidence.

Recent developments in Copilot Studio are accelerating the practical application of reasoning loops in enterprise environments.

With enhanced task completion, agents are no longer restricted to predefined flows. They can dynamically plan tasks, ask clarifying questions, chain multiple tools together, and adapt their behaviour as new information emerges.

This represents a clear shift from automation to autonomy. Instead of mapping every possible path upfront, organisations can define objectives and allow agents to determine how those objectives are achieved in real time.

The reasoning loop becomes the engine behind this capability.

While the concept is powerful, designing effective reasoning loops requires care. Without clear termination conditions, agents can run indefinitely. Without reflection, errors can compound across steps. Without structured context management, important information can be lost over time.

These are not edge cases. They are fundamental design considerations.

Building successful agents therefore requires more than connecting a model to tools. It involves structuring the loop, defining boundaries, and ensuring that the system remains both effective and controllable.
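Context management is the least visible of these considerations, so a small sketch may help. The example below assumes one simple strategy among many: keep the goal pinned, keep only the most recent observations, and note how many older ones were dropped. `BoundedContext` and its parameters are hypothetical names for illustration. Real systems often summarise older steps rather than discarding them.

```python
from collections import deque
from typing import Deque


class BoundedContext:
    """Keeps the goal pinned and only the last `max_recent` observations."""

    def __init__(self, goal: str, max_recent: int = 3) -> None:
        self.goal = goal
        self.recent: Deque[str] = deque(maxlen=max_recent)
        self.dropped = 0   # count of observations that fell out of the window

    def add(self, observation: str) -> None:
        if len(self.recent) == self.recent.maxlen:
            self.dropped += 1          # the oldest entry is about to be evicted
        self.recent.append(observation)

    def render(self) -> str:
        lines = [f"Goal: {self.goal}"]
        if self.dropped:
            lines.append(f"({self.dropped} earlier observations omitted)")
        lines.extend(f"- {obs}" for obs in self.recent)
        return "\n".join(lines)


context = BoundedContext(goal="reconcile the ledger", max_recent=2)
for note in ["opened ledger", "found mismatch", "fixed entry"]:
    context.add(note)
print(context.render())
```

The goal never leaves the context, however long the loop runs, which guards against the agent drifting away from its objective as older observations are discarded.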

The agentic reasoning loop is not just a technical pattern. It represents a new way of structuring work.

Instead of rigid workflows and predefined processes, organisations can begin to define goals and allow intelligent systems to determine how those goals are achieved. Human expertise remains central, but it is augmented by systems that can reason, act, and adapt continuously.

This is where the real transformation lies. The organisations that understand and implement reasoning loops effectively will not simply use AI more efficiently. They will operate differently. And in that shift, a new competitive advantage begins to emerge.